Microsoft Research · 335K

Published September 15, 2021, 18:44

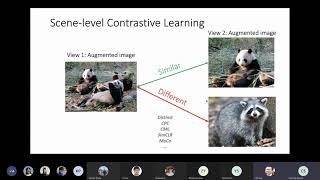

Multi-modal data provides an exciting opportunity to train grounded generative models that synthesize images consistent with real-world phenomena. In this talk, I will share several of our recent efforts towards creating grounded visual generation models: (1) introducing user attention grounding for text-to-image synthesis, (2) improving text-to-image generation results with stronger language grounding, and (3) taking steps towards creating spatially grounded world models for embodied vision-and-language tasks.

Speaker: Jing Yu Koh, Google

MSR Deep Learning team: microsoft.com/en-us/research/g...
