Microsoft Research (335K)

Published July 22, 2019, 18:02

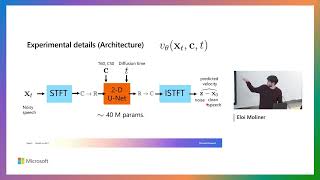

The success of deep convolutional architectures is often attributed in part to their ability to learn multiscale and invariant representations of natural signals. However, a precise study of these properties and how they affect learning guarantees is still missing. In this talk, we consider deep convolutional representations of signals; we study their invariance to translations and to more general groups of transformations, their stability to the action of diffeomorphisms, and their ability to preserve signal information. This analysis is carried out by introducing a multilayer kernel based on convolutional kernel networks and by studying the geometry induced by the kernel mapping. We then characterize the corresponding reproducing kernel Hilbert space (RKHS), showing that it contains a large class of convolutional neural networks with smooth activation functions. This analysis allows us to separate data representation from learning, and to provide a canonical measure of model complexity, the RKHS norm, which controls both the stability and the generalization of any learned model. This theory also leads to new practical regularization strategies for deep learning that are effective when learning on small datasets or when adversarially robust models are needed.
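
As a brief illustration of why the RKHS norm can control stability, the following is a standard consequence of the reproducing property and the Cauchy-Schwarz inequality. The notation is assumed here for illustration and is not taken from the talk: $\Phi$ denotes the kernel mapping, $\mathcal{H}$ the RKHS, and a model $f \in \mathcal{H}$ is evaluated as $f(x) = \langle f, \Phi(x) \rangle_{\mathcal{H}}$.

$$
|f(x) - f(x')| = \left| \langle f, \Phi(x) - \Phi(x') \rangle_{\mathcal{H}} \right| \le \|f\|_{\mathcal{H}} \, \|\Phi(x) - \Phi(x')\|_{\mathcal{H}}
$$

Under these assumptions, if the representation $\Phi$ changes little when a signal $x$ is deformed into $x'$, then the prediction of any model with a small RKHS norm also changes little.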

See more at microsoft.com/en-us/research/v...
